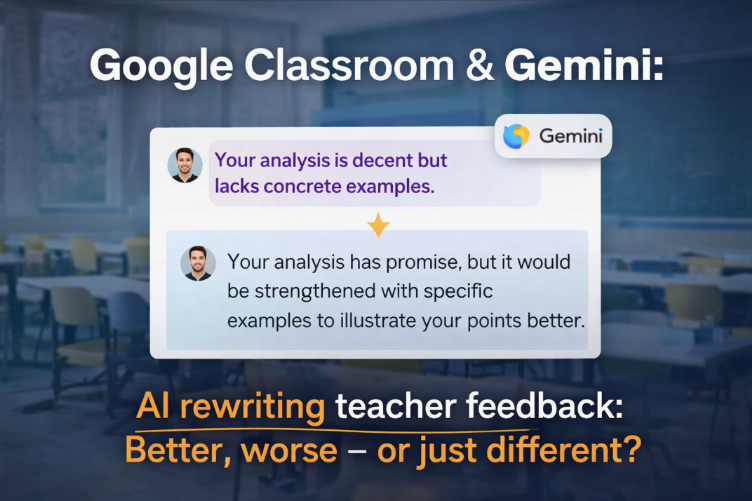

Google has begun testing a new feature in Classroom: a Gemini-based AI that can rewrite teachers' feedback and comments for students.

The news sounds like another example of "AI automating tasks." But if we pause for a moment and look beneath the surface, what's happening here is part of a deeper transformation in how we communicate, teach, and interpret human language in digital systems.

Until recently, in an environment like Classroom, a teacher would write a comment, a student would read it and react. It was direct, human, and specific. With the introduction of a generative model that rewrites feedback, Google is proposing an interface in which language is no longer simply the transmission of a person's thoughts, but becomes a product "interpreted" by a model that applies style, tone, and structure.

In practice, a teacher might write a rough thought—for example, "Your analysis is good, but it lacks concrete examples"—and the AI reformulates it into a more detailed, more positive, or more pedagogical version.

This immediately leads to two reflections:

1. AI doesn't just write "for you."

The reformulation function isn't an automatic replacement for a human. It's a language assistant. In educational environments, where clarity, empathy, and tone are crucial, AI can help translate a thought into a more effective expression: one that is grammatically correct or more consistent with certain communication standards.

But there's a fine line: the intent remains human. If the system simply rewrites without understanding the original message, it risks transforming what was personal and specific into something generic.

2. It changes the communication relationship.

When an AI rewrites human feedback, it also changes the way students and teachers perceive that feedback. It might become more readable and well-structured, but it might also lose nuance or a personal tone. The result could be "more beautiful" language, but perhaps less authentic.

If I think of a school, a classroom, or even an online learning platform, the central question isn't "Can AI do it?" but rather:

"In what cases is it useful for AI to reframe human thought, and in what cases is direct feedback more valuable than good writing?"

No one is taking away teachers' authority.

It's important to emphasize this: this function doesn't replace the teacher. AI isn't correcting or deciding a grade. It's a tool that helps better express a concept.

There are already great examples of similar tools in word processors: automatic suggestions, rewrites, tone control, etc. In Google Classroom, the novelty is that this assistance arrives in an educational environment, contextualized to a student's performance.

Implications for Teaching and Beyond

If a teacher can focus on the content of feedback rather than its linguistic form, AI can alleviate some of the "communication fatigue." This matters most in settings where teachers carry a high workload.

But we must also look beyond the classroom. The same concept applies to customer support rewriting responses, to HR systems generating employee feedback, and to automatic content moderation.

In each of these cases, there is one constant: meaning is mediated by a language model rather than transmitted directly.

In Conclusion

Google Classroom's Gemini feature isn't just "AI that writes for you."

It's a step toward a deeper collaboration between human language and generative language.

This changes expectations about the quality of text, how feedback is given, and how dialogue is structured in digital environments.

Platforms are beginning to understand that form is as important as substance, and that good language facilitates learning and understanding.

It's not a distant future. It's here, now.